NVIDIA Pascal GP100 GPU Expected To Feature 12 TFLOPs of Single Precision Compute, 4 TFLOPs of Double Precision Compute Performance

Benchmarking floating-point precision in mobile GPUs - Graphics, Gaming, and VR blog - Arm Community blogs - Arm Community

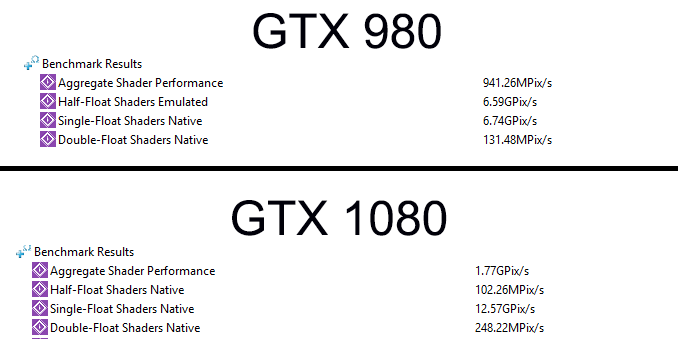

FP16 Throughput on GP104: Good for Compatibility (and Not Much Else) - The NVIDIA GeForce GTX 1080 & GTX 1070 Founders Editions Review: Kicking Off the FinFET Generation

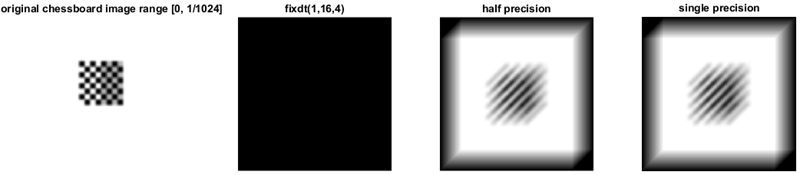

![A Study on Convolution Operator Using Half Precision Floating Point Numbers on GPU for Radioastronomy Deconvolution | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f93daead83667c2173c0814f6479c2b9aa686770/4-Figure4-1.png)

[PDF] A Study on Convolution Operator Using Half Precision Floating Point Numbers on GPU for Radioastronomy Deconvolution | Semantic Scholar