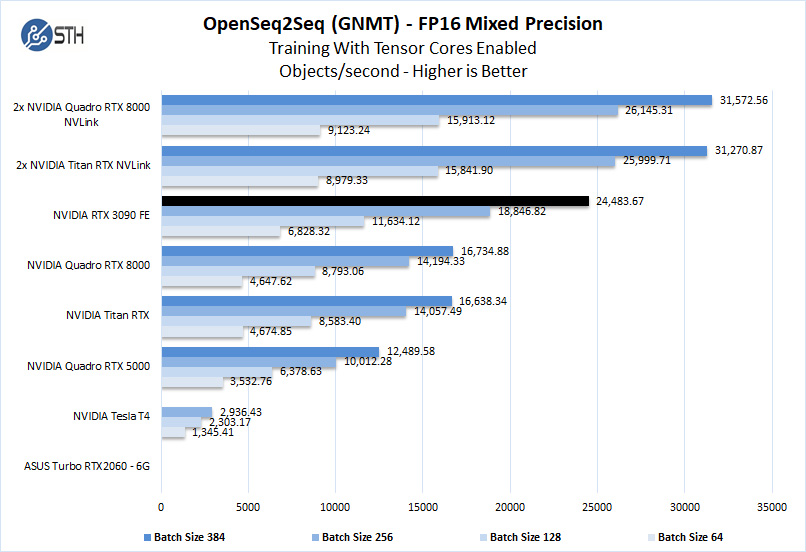

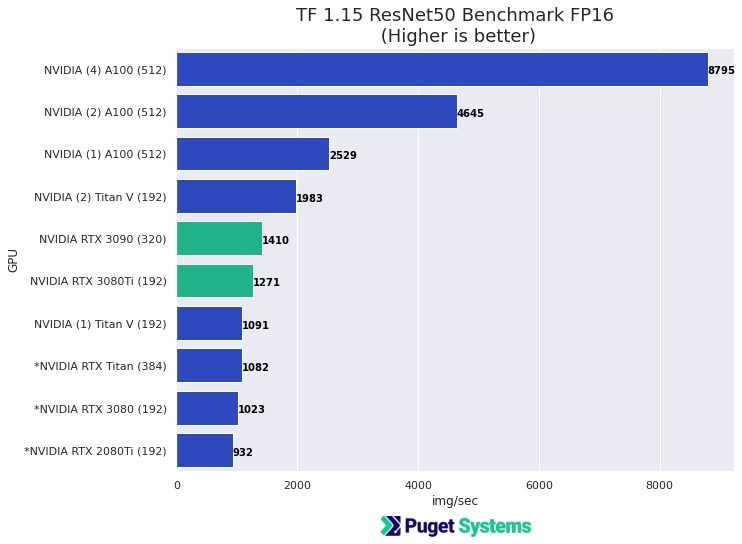

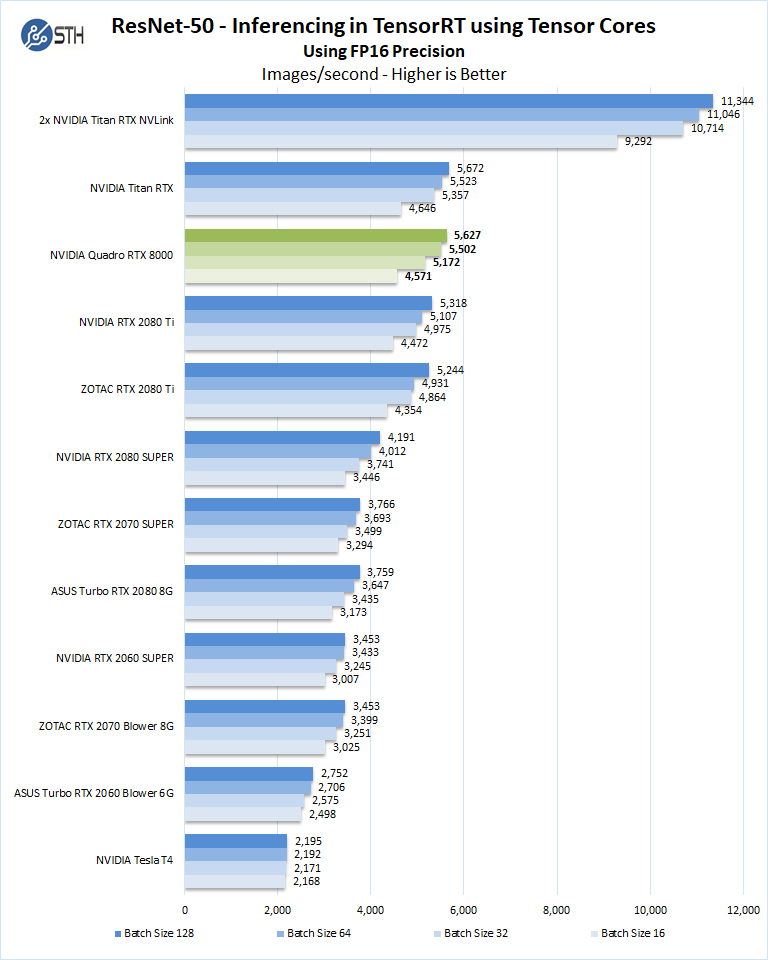

TensorFlow Performance with 1-4 GPUs -- RTX Titan, 2080Ti, 2080, 2070, GTX 1660Ti, 1070, 1080Ti, and Titan V | Puget Systems

NVIDIA's 80-billion transistor H100 GPU and new Hopper Architecture will drive the world's AI Infrastructure - HardwareZone.com.sg